Imagine you had a friend who gave different answers to the same question, depending on how you asked it. “What’s the capital of Peru?” would get one answer, and “Is Lima the capital of Peru?” would get another. You’d probably be a little worried about your friend’s mental faculties.

The answer to the first question is “Lima”, the answer to the 2nd question is “Yes”. Those are different answers.

Many MANY years ago for some high school assignment we were supposed to find some short story/folk tale to read and then stand up and talk about it in class.

Somehow I found a folk tale about a guy walking home from town and he attracted the attention of some small devil.

It was a cold day and as the devil followed the man home it saw the man blowing on his hands and asked, “Why do you blow on your hands?”

The man replied, “Because my hands are cold.”

Making it back home, with the little devil still following, the man makes himself something hot to drink. He blows on the hot beverage before taking a drink. The little devil asks, “Why do you blow on your drink?”, to which the man replies, “Because my drink is hot.”

At this point, the devil loses its shit, tells the man he’s insane and fucks right off.

That quote made me think of this story.

link pls

Link to what?

I’m 99.99% positive it was from some collection of folk tales. Only I can’t remember if it was some book that my mom had or from the school library.

The book or the tale… but sadly it seems you don’t have it anymore… thanks for sharing though!

🤣

It never ceases to amaze me the amount of effort being put into shoehorning a probability machine into being a deterministic fact-lookup assistant. The word “reliable” seems like a bit of a misnomer here. I guess only in the sense of reliable meaning “yielding the same or compatible results in different clinical experiments or statistical trials.” But certainly not reliable in the sense of “fit or worthy to be relied on; worthy of reliance; to be depended on; trustworthy.”

That notion of reliability has to do with “facts” determined by human beings and implanted in the model as learned “knowledge” via its training data. There’s just so much wrong with pushing LLMs as a means of accurate information. One of the problems: suppose they got an LLM to, say, reflect the accuracy of Wikipedia or something 99% of the time. Even setting aside how shaky Wikipedia would be on some matters, it’s still a black-box AI that you can’t check the sources on. You are supposed to just take it at its word. So sure, okay, you tune the thing to give the “correct” answer more consistently, but the person using it doesn’t know that and has no way to verify that you have done so, without checking outside sources, which defeats the whole point of using it to get factual information…! 😑

Sorry, I think this is turning into a rant. It frustrates me that they keep trying to shoehorn LLMs into being fact machines.

I think the reason is that people want the type of interface LLMs provide. You can communicate with it using natural language, and it’s basically like having a human secretary that’s always around to help you out. I don’t think the fact that it is a stochastic engine is the fundamental problem actually. The human brain also works on probabilities and we obviously have the capacity to do tasks repeatedly with a high degree of reliability.

Humans build an internal model of the physical world through experience. We continuously learn how the world around us behaves, and this provides us with a shared context we lean on when communicating with each other. This context is crucial for being able to explain why you made a particular decision, and allows for error correction and guidance towards better decisions through conversation. This is largely what we mean by having understanding in a human sense. Meanwhile, LLMs rely on statistical analysis of data without any of the context inherent in human perspective. As a result, LLMs are unable to genuinely grasp the tasks they perform or make informed decisions, due to their lack of this fundamental understanding.

I think that one approach towards allowing LLMs to effectively interact with humans would be to follow a similar process as with human child development. This involves training through embodiment and constructing an internal world model that allows the model to develop an intuition about how objects behave in the physical realm. Then we’d have to teach it language within this context. At that point the decisions it makes would be grounded in the same context that we all lean on, and we could interact with it in a meaningful way.

That’s an interesting take on it and I think sort of highlights part of where I take issue. Since it has no world model (at least, not one that researchers can yet discern substantively, anyway) and has no adaptive capability (without purposeful fine-tuning of its output from Machine Learning engineers), it is sort of a closed system. And within that, is locked into its limitations and biases, which are derived from the material it was trained on and the humans who consciously fine-tuned it toward one “factual” view of the world or another. Human beings work on probability in a way too, but we also learn continuously and are able to do an exchange between external and internal, us and environment, us and other human beings, and in doing so, adapt to our surroundings. Perhaps more importantly in some contexts, we’re able to build on what came before (where science, in spite of its institutional flaws at times, has such strength of knowledge).

So far, LLMs operate sort of like a human whose short-term memory is failing to integrate things into long-term, except it’s just by design. Which presents a problem for getting it to be useful beyond specific points in time of cultural or historical relevance and utility. As an example to try to illustrate what I mean, suppose we’re back in time to when it was commonly thought the earth is flat and we construct an LLM with a world model based on that. Then the consensus changes. Now we have to either train a whole new LLM (and LLM training is expensive and takes time, at least so far) or somehow go in and change its biases. Otherwise, the LLM just sits there in its static world model, continually reinforcing the status quo belief for people.

OTOH, supposing we could get it to a point where an LLM can learn continuously, now it has all the stuff being thrown at it to contend with and the biases contained within. Then you can run into the Tay problem, where it may learn all kinds of stuff you didn’t intend: https://en.wikipedia.org/wiki/Tay_(chatbot)

So I think there are a couple of important angles to this. One is the purely technical endeavor of seeing how far we can push the capability of AI (which I am not opposed to inherently, I’ve been following and using generative AI for over a year now as it has become more of a big thing). And then there is the culture/power/utility angle where we’re talking about what kind of impact it has on society and what kind of impact we think it should have and so on. And the 2nd one is where things get hairy for me fast, especially since I live in the US and can easily imagine such a powerful mode of influence being used to further manipulate people. Or on the “incompetence” side of malice and incompetence, poorly regulated businesses simply being irresponsible with the technology. Like Google’s recent stuff with AI search result summaries giving hallucinations. Or like what happened with the Replika chatbot service in early 2023, where they filtered it heavily out of nowhere claiming it was for people’s “safety” and in so doing, caused mental health damage to people who were relying on it for emotional support; and mind you, in this case, the service had actively designed it and advertised it as being for that, so it wasn’t like people were using it in an unexpected way from that standpoint. The company was just two-faced and thoughtless throughout the whole affair.

I very much agree with all that. Basically the current approach can do neat party tricks, but to do anything really useful you’d have to do things like building a world model, and allowing the system to do adaptive learning on the fly. People are trying to skip really important steps and just go from having an inference engine to AGI, and that’s bound to fail. The analogy between LLMs and short-term memory is very apt. It’s an important component of the overall cognitive system, but it’s not the whole thing.

In regards to the problems associated with this tech, I largely agree as well, although I would argue that the root problem is capitalism as usual. Of course, since we do live under capitalism, and that’s not changing in the foreseeable future, this tech will continue being problematic. It’s hard to say at the moment how much influence this stuff will really have in the end. We’re kind of in uncharted territory right now, and it’ll probably become more clear in a few years.

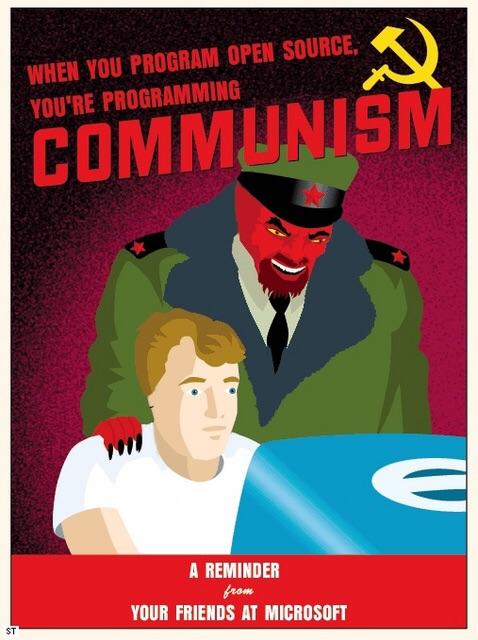

I do think that at the very least this tech needs to be open. The worst case scenario will be that we’ll have megacorps running closed models trained in opaque ways.

Eventually, the generator and the discriminator begin to agree more as they settle into something called Nash equilibrium. This is arguably the central concept in game theory. It represents a kind of balance in a game—the point at which no players can better their personal outcomes by shifting strategies. In rock-paper-scissors, for example, players do best when they choose each of the three options exactly one-third of the time, and they will invariably do worse with any other tactic.

This last sentence is completely wrong, yeah?

lol I did a double take on that as well when I was reading it
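
For anyone who wants to check the rock-paper-scissors claim, here is a minimal sketch. It assumes the usual zero-sum scoring (+1 for a win, 0 for a tie, -1 for a loss), which the quoted article doesn't spell out, and the helper names (`payoff`, `expected_payoff`) are just made up for illustration.

```python
from fractions import Fraction

MOVES = ("rock", "paper", "scissors")
# Each key beats the move it maps to.
BEATS = {"rock": "scissors", "paper": "rock", "scissors": "paper"}


def payoff(mine, theirs):
    """Assumed scoring: +1 for a win, 0 for a tie, -1 for a loss."""
    if mine == theirs:
        return 0
    return 1 if BEATS[mine] == theirs else -1


def expected_payoff(p, q):
    """Expected payoff to a player mixing with p against an opponent mixing with q."""
    return sum(p[a] * q[b] * payoff(a, b) for a in MOVES for b in MOVES)


third = Fraction(1, 3)
uniform = {m: third for m in MOVES}
always_rock = {"rock": Fraction(1), "paper": Fraction(0), "scissors": Fraction(0)}
always_paper = {"rock": Fraction(0), "paper": Fraction(1), "scissors": Fraction(0)}

# Against a uniformly mixing opponent, deviating costs nothing.
print(expected_payoff(uniform, uniform))
print(expected_payoff(always_rock, uniform))

# A predictable player can be exploited by an opponent who adapts.
print(expected_payoff(always_rock, always_paper))
```

Under that scoring, the first two values both come out to 0 and the last is -1. So against an opponent playing the one-third mix, any other tactic does exactly as well, not "invariably worse". What makes the uniform mix the equilibrium is that nobody can gain by deviating from it, and that it is the only strategy an adapting opponent can't exploit.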

How game theory can make AI reliably kill you and everyone you love, to save companies money!